Making the illustrations for "Founding is a Snowball"

A couple of months ago I published a sort of “children’s book for adults” called Founding is a Snowball. While the story is cute, what made it compelling were the accompanying illustrations. People were curious how I put them together, so here are the details so you can try it yourself.

At a High Level

I made the illustrations with Nano-banana.[1]

I had GPT-5.2 describe reference images in words to make them more editable and portable.

Overly verbose, expressive image prompts used to be required, but modern models seem to find them distracting. I had better results telling them to speak clearly and concisely, the way you would to a person.

I defaulted to generating everything at least three times to get a sense of what was a prompt problem and what was model variance.

The total cost for the post was about $12.

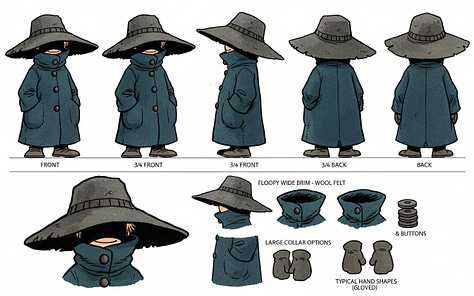

Creating the Character

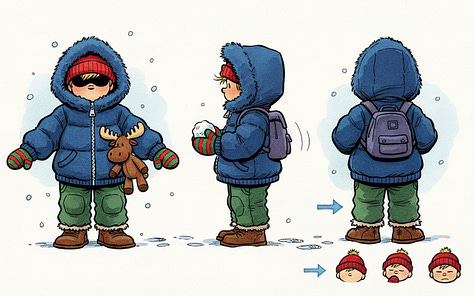

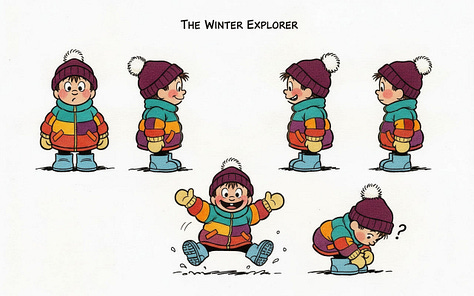

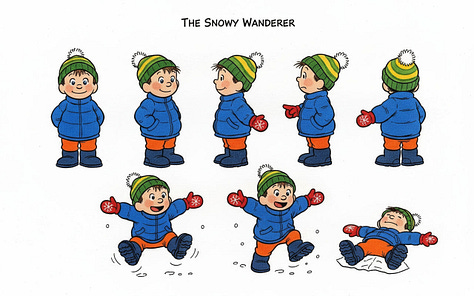

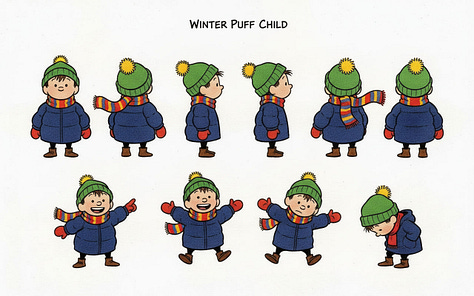

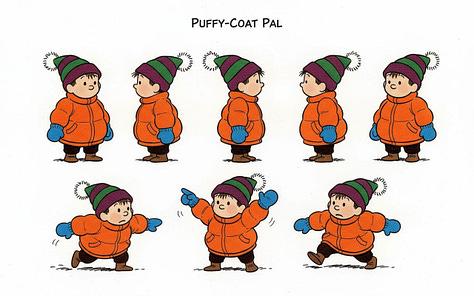

In the first round of character generations, I ended up with this one, which I liked quite a bit. It became the canonical reference, which Nano-banana dubbed “Hat-coat Wanderer.”

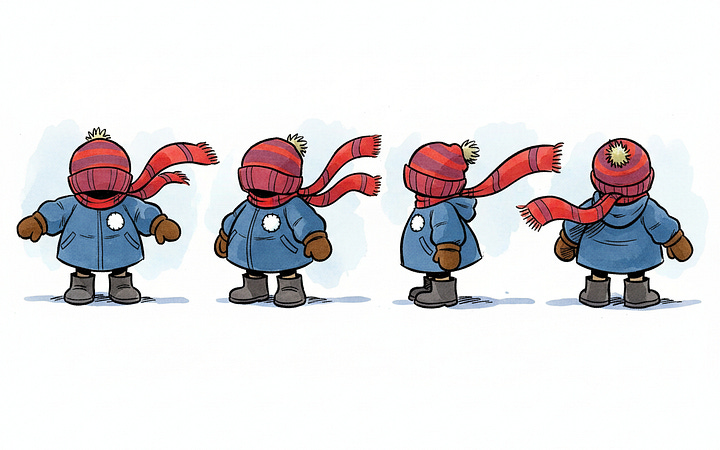

The variance between generations was pretty high. Here are some rejected examples:

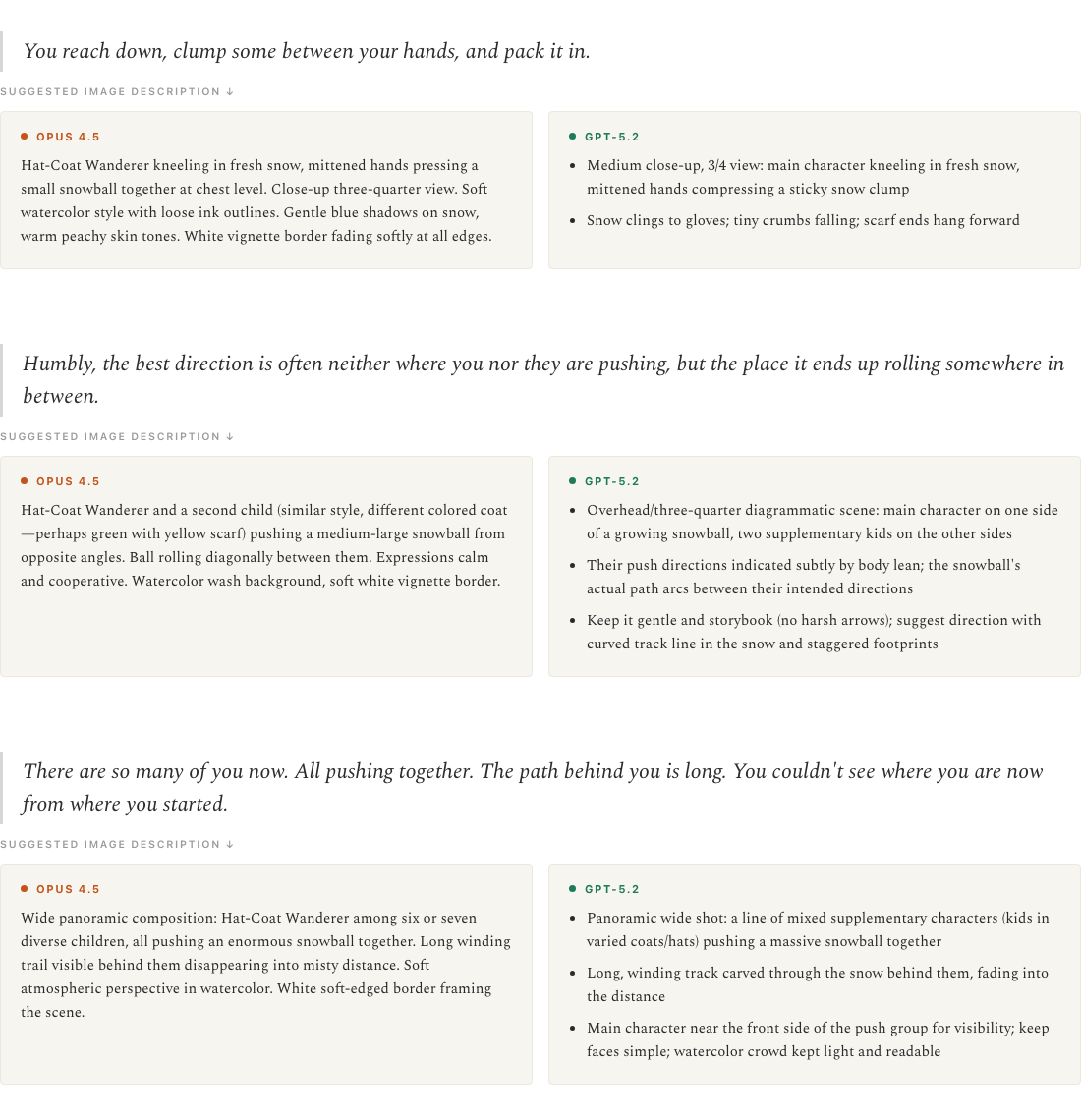

Specifying Illustrations

Next, I chose the lines in the story to illustrate. I wanted to space them evenly throughout the story while highlighting the emotionally salient scenes. At first, I tried generating each scene description individually, but they all sounded the same. Generating them all at once produced much better scenes. I didn’t know which model would do this better, so I tried both Opus 4.5 and GPT-5.2.

I couldn’t use either model’s responses wholesale. For some scenes, I combined the best parts of both descriptions. Other times, I tweaked a description toward something the image model could generate more reliably. There were also scenes where I already had something in mind and wanted to see if the model agreed.

Generating the Illustrations

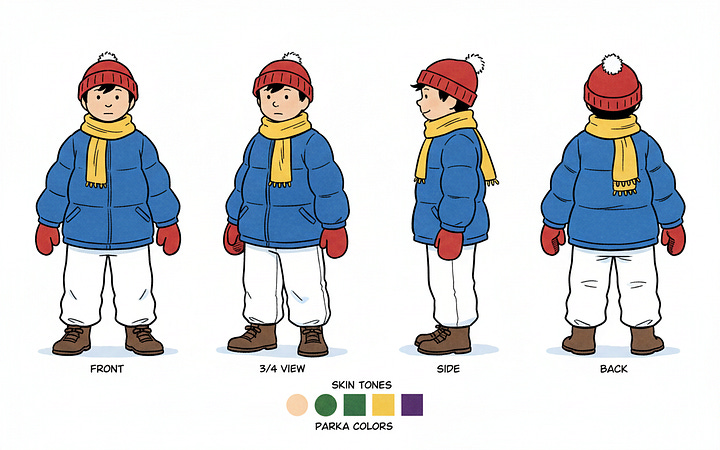

Each generation received:

a chunk of general style text describing the characters, world, and art style

a sample image from the references

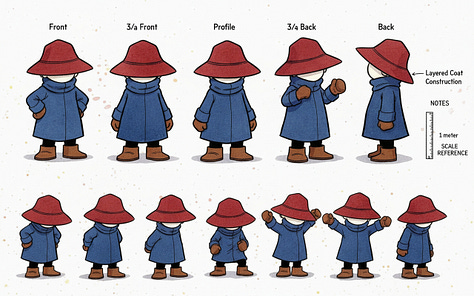

the character turnaround for any characters in the scene

the specific scene description
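As a rough sketch of how those four inputs fit together, here is one way to assemble them into an ordered list of text and image parts, repeated three times per scene to match the habit of generating everything at least three times. This assumes a generic multimodal request that accepts such a list; the names (`Scene`, `build_request`, `build_batch`) and file paths are hypothetical, and the actual call to Nano-banana is deliberately left out rather than guessed.

```python
from dataclasses import dataclass, field


@dataclass
class Scene:
    """One illustrated line of the story (hypothetical structure)."""
    description: str
    characters: list[str] = field(default_factory=list)


def build_request(style_text, style_reference, turnarounds, scene):
    """Assemble the ordered parts for a single generation.

    Order: general style text, one sample style image, the turnaround
    for each character in the scene, then the scene description.
    Parts are (kind, value) tuples; the real request format depends on
    the image API you use, so this shape is an assumption.
    """
    parts = [("text", style_text), ("image", style_reference)]
    for name in scene.characters:
        # Raises KeyError if a character has no turnaround yet.
        parts.append(("image", turnarounds[name]))
    parts.append(("text", scene.description))
    return parts


def build_batch(style_text, style_reference, turnarounds, scene, n_samples=3):
    """Repeat the same request so prompt problems can be told apart from model variance."""
    return [
        build_request(style_text, style_reference, turnarounds, scene)
        for _ in range(n_samples)
    ]


if __name__ == "__main__":
    opening = Scene("<scene description>", ["wanderer"])
    batch = build_batch(
        "<general style text: characters, world, art style>",
        "style_reference.png",                   # hypothetical path
        {"wanderer": "wanderer_turnaround.png"},  # hypothetical path
        opening,
    )
    for part in batch[0]:
        print(part)
```

Each of the three identical requests would then go to the image model separately; comparing the results is what separates a prompt problem (all three miss) from variance (one of three lands).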

There was a surprising amount of variability in these. Here are a bunch of samples for the opening illustration. Some of that variance came from the model failing to adhere to the prompt, and some came from my under-specifying what I was looking for.

Even so, I rarely one-shotted the illustration I ended up using; most of them needed edits. One clear example is the background of the opening image. Once I found a pose and framing I liked, I removed the original background, which I found too heavy, and replaced it with something lighter.[2]

Places the Model Struggled

The models have gotten so much better. Edit models have been a step-change improvement, and Nano-banana specifically seems like the breakthrough that makes consistency possible. However, there were still gaps where I was fighting a tool rather than working with it as a collaborator.

Creating a second character

The turnaround for the second character was a delicate dance: extracting the essence of the art style without grabbing too much of the specific character. I wanted someone individually recognizable, not a color-swapped version of the main character.

I tried a few different tactics to brainstorm how characters might differ: height, weight, skin color, hair color, and different kinds of hats, jackets, and other winter gear. The tactics that got the LLM brainstorming effectively were very similar to the ones I’d built into Imaginator.

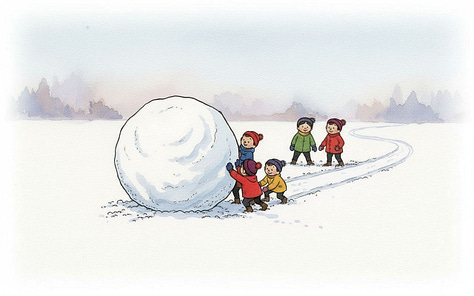

Placing characters relative to each other

For the line “Humbly, the best direction is often neither where you nor they are pushing, but the place it ends up rolling somewhere in between,” Nano-banana really wanted to put the characters on opposite sides of the snowball, but the line calls for the characters to push at slightly different angles. Their combined vector still needs to point somewhere for the story to make sense.
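The “combined vector” point can be made concrete with a little arithmetic. This is a minimal sketch, assuming each character’s push is a 2D vector with its heading measured in degrees from the direction the ball should travel; `combined_push` is a hypothetical helper for illustration, not anything the model consumed. Two pushes a few degrees apart add up to a push that still rolls the ball, in between the two, while pushes from opposite sides cancel.

```python
import math


def combined_push(heading_a_deg, heading_b_deg, force_a=1.0, force_b=1.0):
    """Add two pushes as 2D vectors.

    Headings are in degrees from the intended direction of travel.
    Returns (magnitude, heading_deg) of the resultant push.
    """
    ax = force_a * math.cos(math.radians(heading_a_deg))
    ay = force_a * math.sin(math.radians(heading_a_deg))
    bx = force_b * math.cos(math.radians(heading_b_deg))
    by = force_b * math.sin(math.radians(heading_b_deg))
    x, y = ax + bx, ay + by
    return math.hypot(x, y), math.degrees(math.atan2(y, x))


if __name__ == "__main__":
    # Slightly different angles: heading about 25 degrees, magnitude about 1.93.
    print(combined_push(10, 40))
    # Opposite sides of the ball: magnitude is essentially zero, so it goes nowhere.
    print(combined_push(0, 180))
```

Passing unequal `force_a` and `force_b` tilts the resultant toward the stronger push.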

I tried a host of ways to convey this: prose descriptions, angle measurements, even a diagram.

Eventually I got lucky, but even then the snowball’s path never made sense, so I had to edit it in afterward.

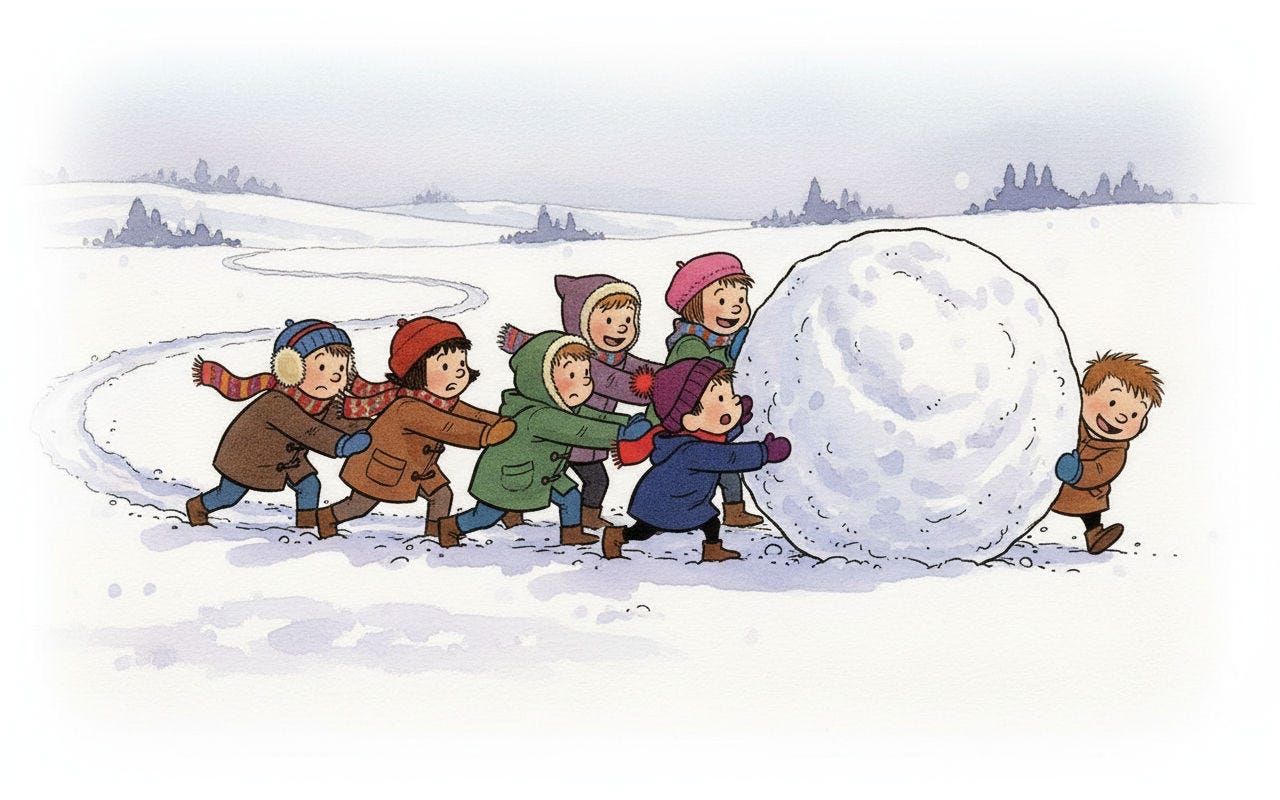

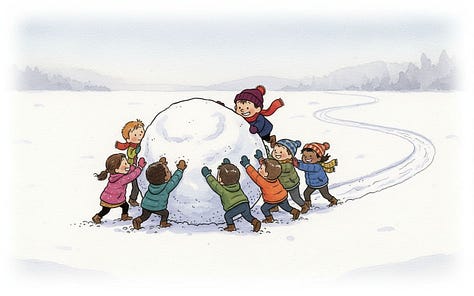

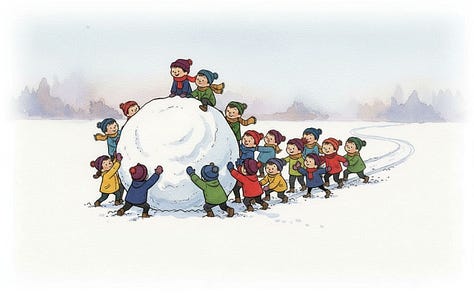

Many children pushing

It was comically hard to get a crowd pushing the ball. The model lost any sense of where the ground was: these characters are either climbing on the ball or floating in space.

It was easy to make a crowd but hard to make a good one. Generating the crowd all at once worked better than adding children one at a time as edits. Edits led to the same character being added in multiple places, or to the style or scale of the characters drifting so they looked out of place.

While I was finally able to get them pushing in one direction, I’m not sure how effective their strategy would actually be.

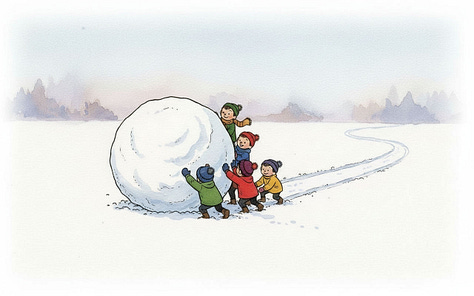

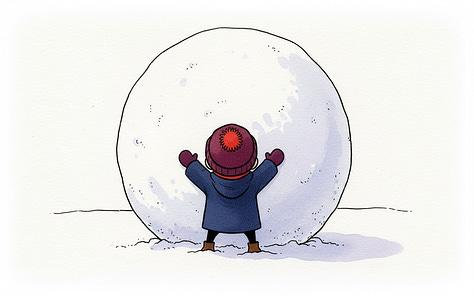

A really big snowball

Nano-banana consistently produced a snowball about twice the size of the character. I tried a handful of strategies, like “a massive snowball that fills most of the frame” or “the size of an office building,” and edits like “Make the snowball 10 times the characters size.”

In the end, the bit of hyperbole that got me there was “Make the character tiny so the snowball looks like a planet!”

Conclusion

One commenter on Hacker News captured it perfectly:

> I know some people are going to be upset at model generated illustrations. But I think the alternate is probably, no illustrations. There’s a lot of unnecessary AI image slop all around and most add no value or worse makes you just avoid reading the content by their awfulness. This was done really well and I am not sure I would have read it fully without it.

Even as someone who grew up doodling in all of my school notebooks, I doubt I would ever have made illustrations good enough to publish this. I certainly would not have hired someone. But if I keep getting better at this, maybe for a future project, I can.

I’m not sure if these illustrations will stand the test of time or if they’ll feel dated, like Stable Diffusion images or the animation in the original Shrek. Regardless of how timeless the work is, I’m thankful that something that came out of my head reached people in a positive way.

[1] Nano-banana-pro didn’t give meaningfully better results and was much more expensive; Nano-banana-2 wasn’t out at the time. All of the models in this article might be out of date now. It’s amazing how fast this all moves!

[2] If I were to go back and update the piece, I would spend more time on the backgrounds, making them more consistent in content and style.